Hey there!

I want to make sure the content here is actually valuable to you. To do that, I need to know who's in the room! If you have a quick minute, drop your thoughts in this brief survey about your role, industry, and passions. Help me tailor this space entirely to your interests.

Today:

Claude Mythos Signals a New Era in AI Cybersecurity

Meta Shifts to a "Hybrid" Open-Source Strategy for Its Next-Gen Models

AI Guardians to Shape the Future in OpenAI's New Safety Fellowship

Anthropic Supercharges Claude with a Massive Google & Broadcom Deal

Arcee Unleashes 'Trinity' for Complex Problem-Solving

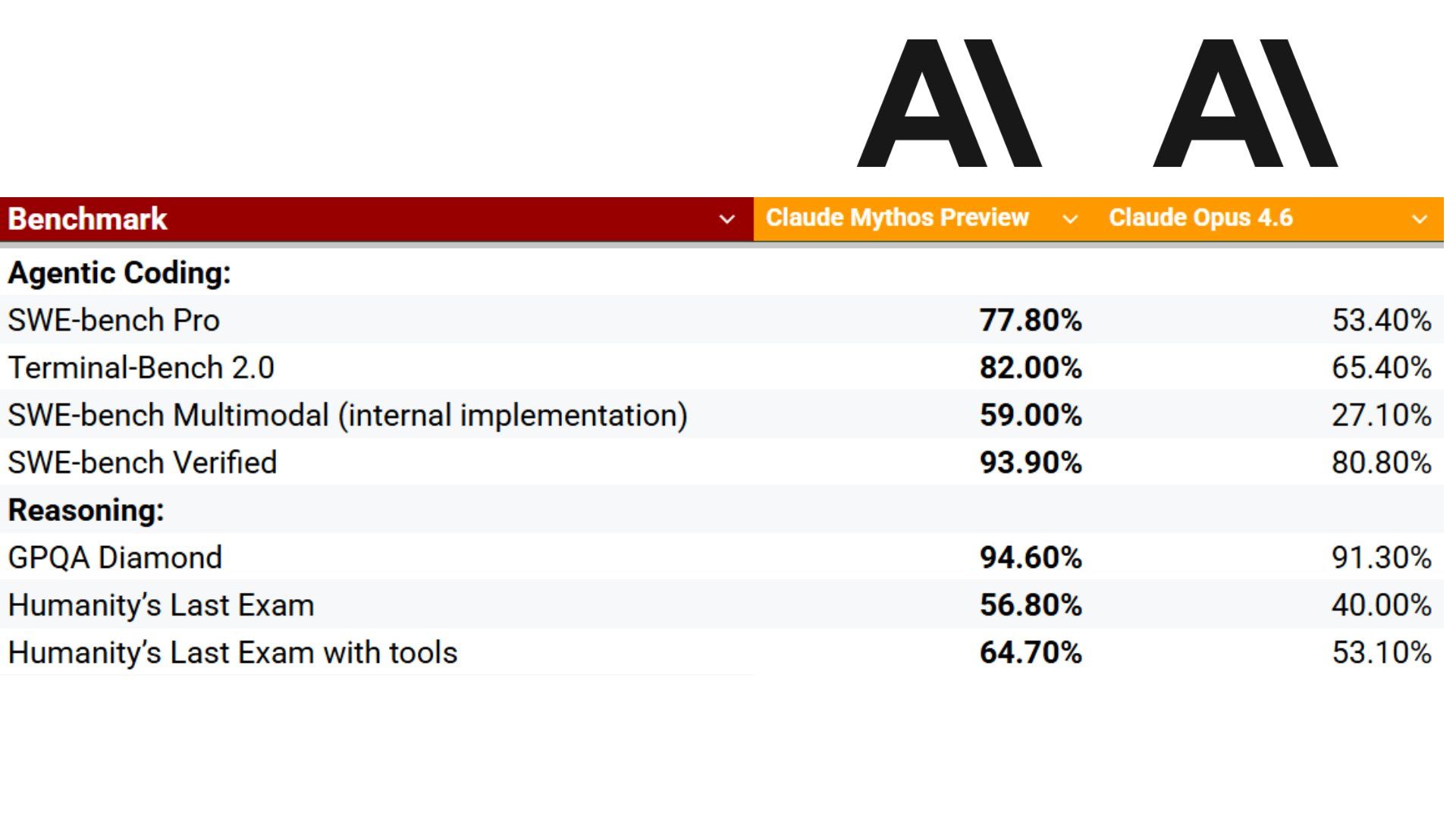

Anthropic says its unreleased model, Claude Mythos Preview, is now so good at finding and exploiting software bugs that it outperforms all but the most skilled human security experts. That is why Anthropic launched Project Glasswing alongside it, putting the model to work as a defensive tool before capabilities like this spread more widely.

What makes this feel different is the scale.

Mythos Preview has already found thousands of high-severity vulnerabilities, including bugs in every major operating system and every major web browser. Some of the examples are kind of wild: a 27-year-old OpenBSD bug, a 16-year-old FFmpeg bug that had survived millions of automated tests, and Linux kernel exploit chains that could escalate an ordinary user's privileges all the way to root, which basically means complete control of the machine.

The more unsettling part is how little hand-holding it needed.

The model could often work almost entirely on its own: read code, form a theory about where the weakness might be, test it, debug it, and then produce a bug report with proof-of-concept exploit steps. In plain English, this is not just AI helping a security researcher think faster. It is AI acting more like a tireless vulnerability hunter.
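Why can a bug survive millions of automated tests yet fall to something that reads the code? A toy Python sketch makes the arithmetic concrete. Everything in it is hypothetical: `parse` is an invented target whose bug hides behind a 4-byte header check, and the "read the code, form a theory" step is reduced to trying constants that appear in the source. It is not how Mythos Preview works, and it is not any of the real bugs mentioned above.

```python
import math
import random

# Hypothetical 4-byte header that guards the buggy code path (invented for illustration).
MAGIC = b"\x7fAV1"


def parse(data: bytes) -> None:
    """Toy target: the 'bug' only fires when the input starts with MAGIC."""
    if data[:4] == MAGIC:
        raise IndexError("simulated out-of-bounds read")


def random_fuzz(trials: int, seed: int = 0) -> int:
    """Blind fuzzing: count how many uniformly random 4-byte inputs trigger the bug."""
    rng = random.Random(seed)
    crashes = 0
    for _ in range(trials):
        try:
            parse(rng.randbytes(4))
        except IndexError:
            crashes += 1
    return crashes


def p_hit(trials: int, bits: int = 32) -> float:
    """Chance that at least one of `trials` random inputs hits one specific `bits`-bit value."""
    # 1 - (1 - 2**-bits) ** trials, computed stably with log1p/expm1.
    return -math.expm1(trials * math.log1p(-(2.0 ** -bits)))


def hypothesis_driven(candidates: list[bytes]) -> list[bytes]:
    """'Form a theory, test it': try inputs suggested by reading the source."""
    confirmed = []
    for guess in candidates:
        try:
            parse(guess)
        except IndexError:
            confirmed.append(guess)  # keep it as a reproducible proof-of-concept input
    return confirmed


if __name__ == "__main__":
    print(f"P(blind fuzzing hits it in 1M tries) = {p_hit(1_000_000):.6f}")
    print("crashes in 100k random tries:", random_fuzz(100_000))
    # Constants a reader would spot in parse()'s header check:
    print("confirmed inputs:", hypothesis_driven([b"\x00\x00\x00\x00", MAGIC]))
```

In this toy, a million blind tries have only about a 0.02% chance of ever reaching the bug, while a single guess informed by reading the header check finds it immediately. Real fuzzers are much smarter than uniform random bytes (coverage guidance, dictionaries of comparison constants), so treat this purely as intuition for why some bugs stay hidden for decades.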

That is where Glasswing comes in.

Anthropic has pulled together a pretty heavyweight group for the project, including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. It is also extending access to more than 40 additional organizations that maintain critical software, while committing up to $100 million in usage credits and $4 million in donations to open-source security groups. The message is clear: if these tools are about to change cyber offense, defenders need to move first and move together.

To me, this is the real headline.

Mythos Preview is the capability story, but Glasswing is the strategy story. Anthropic is basically saying we have crossed a line where advanced AI can no longer be treated as just a productivity boost for coding. It is becoming a force multiplier for cyber defense and, potentially, cyber offense too. And once that becomes true, the race is no longer just about building stronger models. It is about who uses them to harden the digital world before attackers do.

Remember in 2025 when Meta invested roughly $14.3 billion in Scale AI for a 49% stake and brought its CEO, Alexandr Wang, on board to lead a new "Superintelligence" group? Well, we’re finally about to see the fruits of that labor.

Meta is preparing to release its next generation of AI models: reportedly a language model codenamed 'Avocado' and a multimedia generator known as 'Mango'. What’s fascinating here is the strategy shift. After Llama 4 fell somewhat behind the frontier, Meta is moving toward a hybrid approach.

Meta still plans to release open-source versions to distribute the technology broadly, but it will keep certain pieces proprietary to manage safety risks and maintain a competitive edge. It’s a delicate balancing act, and I’m incredibly curious to see how these new Wang-led models stack up when they officially drop.

OpenAI just announced something you might want to jump on. They are launching the OpenAI Safety Fellowship, a brand-new pilot program running from September 2026 through February 2027.

If you’re passionate about AI alignment, privacy, robust evaluations, or preventing high-severity misuse, this is a golden opportunity. The coolest part? They don’t care about fancy academic credentials; they are prioritizing real technical judgment and execution. Fellows get a monthly stipend, compute support, and direct mentorship from the OpenAI team (plus an optional workspace at Constellation in Berkeley). Applications close on May 3rd, so if you want to help shape the safety of future frontier models, definitely get your application in.

🧠RESEARCH

Researchers introduced OpenWorldLib to organize and define "world models": AI systems that perceive, interact with, and remember their surroundings in order to predict how complex environments will behave. Because the field lacked a clear definition, the project provides a single standard framework that brings different world-model tasks together, making models easier to reuse and run side by side.
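To make the idea of one shared framework concrete, here is a minimal Python sketch assuming a hypothetical `WorldModel` interface with `perceive` and `predict` methods. These names are invented for illustration and are not OpenWorldLib's actual API. Two toy models of an object on a one-dimensional track plug into the same `evaluate` harness, which is the kind of reuse a common standard is meant to enable.

```python
from dataclasses import dataclass, field
from typing import Protocol


class WorldModel(Protocol):
    """Hypothetical shared interface (NOT OpenWorldLib's real API)."""

    def perceive(self, observation: float) -> None: ...

    def predict(self, action: float, steps: int) -> list[float]: ...


@dataclass
class ConstantVelocity:
    """Toy model: remembers past positions and extrapolates the last observed velocity."""
    memory: list[float] = field(default_factory=list)

    def perceive(self, observation: float) -> None:
        self.memory.append(observation)  # 'remember' what it has seen

    def predict(self, action: float, steps: int) -> list[float]:
        if not self.memory:
            return [0.0] * steps
        pos = self.memory[-1]
        vel = self.memory[-1] - self.memory[-2] if len(self.memory) > 1 else 0.0
        vel += action  # 'interact': the chosen action nudges the velocity
        out = []
        for _ in range(steps):
            pos += vel
            out.append(pos)
        return out


@dataclass
class StaticWorld:
    """Toy baseline: assumes nothing moves and ignores actions."""
    last: float = 0.0

    def perceive(self, observation: float) -> None:
        self.last = observation

    def predict(self, action: float, steps: int) -> list[float]:
        return [self.last] * steps


def evaluate(model: WorldModel, observations: list[float], action: float, steps: int) -> list[float]:
    """One harness runs any model that satisfies the shared interface."""
    for obs in observations:
        model.perceive(obs)
    return model.predict(action, steps)


if __name__ == "__main__":
    history = [0.0, 1.0, 2.0]
    for model in (ConstantVelocity(), StaticWorld()):
        print(type(model).__name__, evaluate(model, history, action=0.0, steps=3))
```

The design choice worth noticing is that `evaluate` knows nothing about either model's internals; any new model only has to implement the two methods to be benchmarked alongside the others.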

Large AI models consume massive amounts of memory when working through long chains of thought, because they keep cached data for every token they have already processed. To fix this bottleneck, researchers created TriAttention, a new method that predicts which pieces of cached data matter most and keeps those. This drastically shrinks memory usage, allowing massive AI models to run on everyday computers.
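The general idea of keeping only the most important cached data can be sketched in a few lines of Python. This is a generic accumulated-attention eviction policy swapped in for illustration (score each cached token by how much attention recent queries paid to it, then keep the top `budget`); it is not TriAttention's actual predictor, and all function names here are hypothetical.

```python
import heapq
import math


def attention_weights(query: list[float], keys: list[list[float]]) -> list[float]:
    """Softmax over scaled dot products between one query and every cached key."""
    scale = math.sqrt(len(query))
    logits = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def importance_scores(recent_queries: list[list[float]], keys: list[list[float]]) -> list[float]:
    """Total attention each cached token received from a window of recent queries."""
    scores = [0.0] * len(keys)
    for query in recent_queries:
        for i, w in enumerate(attention_weights(query, keys)):
            scores[i] += w
    return scores


def compress_cache(
    keys: list[list[float]],
    values: list,
    recent_queries: list[list[float]],
    budget: int,
) -> tuple[list[list[float]], list]:
    """Keep the `budget` highest-scoring (key, value) pairs, in their original order."""
    if budget >= len(keys):
        return keys, values
    scores = importance_scores(recent_queries, keys)
    keep = heapq.nlargest(budget, range(len(keys)), key=scores.__getitem__)
    keep.sort()  # restore token order so positions stay consistent
    return [keys[i] for i in keep], [values[i] for i in keep]


if __name__ == "__main__":
    keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
    values = ["a", "b", "c", "d"]
    kept_keys, kept_values = compress_cache(keys, values, [[1.0, 0.2]], budget=2)
    print(kept_values)  # ['a', 'c']: half the cache, keeping the entries the query attends to most
```

Real systems apply a policy like this per layer and per attention head on GPU tensors rather than Python lists; the sketch only shows the "score, rank, keep the top few" shape of the idea.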

AvatarPointillist is a new tool that turns a single flat photo into a realistic, moving 3D digital avatar. It builds the character dot by dot, spending more detail where the person's features are more intricate. This step-by-step approach produces highly detailed, lifelike digital humans that can be easily animated and controlled.
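One way to picture "spending more detail where features are intricate" is a fixed point budget split across face regions in proportion to how complex each region is. The Python sketch below is a hypothetical illustration: the region names, the detail scores, and the proportional rule with largest-remainder rounding are all assumptions, not AvatarPointillist's published method.

```python
def allocate_points(detail: dict[str, float], budget: int) -> dict[str, int]:
    """Split `budget` points across regions in proportion to their detail scores.

    Assumes non-negative scores. Uses largest-remainder rounding so the integer
    counts always sum exactly to `budget`.
    """
    total = sum(detail.values())
    if total <= 0:
        raise ValueError("detail scores must sum to a positive number")
    raw = {region: budget * score / total for region, score in detail.items()}
    alloc = {region: int(share) for region, share in raw.items()}  # floor, since shares are >= 0
    leftover = budget - sum(alloc.values())
    # Hand the remaining points to the regions with the largest fractional parts.
    by_remainder = sorted(raw, key=lambda r: raw[r] - alloc[r], reverse=True)
    for region in by_remainder[:leftover]:
        alloc[region] += 1
    return alloc


if __name__ == "__main__":
    # Hypothetical detail scores: more intricate regions get higher numbers.
    face = {"hair": 5.0, "eyes": 3.0, "cheeks": 1.0, "neck": 1.0}
    print(allocate_points(face, 1000))
    # {'hair': 500, 'eyes': 300, 'cheeks': 100, 'neck': 100}
```

Largest-remainder rounding is used here simply because it keeps the total exact; a real generator would more likely let the model decide point density as it goes.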

🗞️MORE NEWS

Anthropic is partnering with Google and Broadcom to build out a massive network of specialized AI chips. The added computing power will help Claude keep up with a huge jump in users and stay at the cutting edge.

Arcee AI released Trinity, a massive, free-to-use AI model designed specifically for complex problem-solving. It uses a "thinking" process to map out its steps before answering, which makes it effective at handling long conversations and taking actions across different software tools.

Google launched a new mobile app that uses artificial intelligence to turn speech into text without needing an internet connection. This allows users to privately and reliably take notes even in places with terrible cell phone service.

A group of former OpenAI employees launched a $100 million venture fund to back new technology startups. Drawing on their deep technical knowledge, they are steering clear of hype-driven trends and instead funding practical physical technology, such as advanced factory robots.

Meta turned software testing into a game by awarding the title of "Token Legend" to the employees who process the most tokens (the small units of data AI models work with) using internal company tools. The friendly competition encourages staff to push the software to its limits, helping the company find errors and improve its products much faster.